Abstract

Ensuring the safety, durability, and cost-effectiveness of road infrastructure maintenance requires accurate and efficient damage assessment. However, the heterogeneous nature of pavement deterioration, ranging from cracks and potholes to exposed aggregate areas, poses significant challenges for its analysis and classification. This study introduces a novel hybrid methodology that integrates advanced segmentation, feature extraction, and classification techniques to enhance the accuracy and robustness of road-damage detection. Unlike conventional approaches, the proposed method leverages Enhanced Fuzzy C-Means and vessel segmentation to achieve precise damage detection, thereby overcoming the limitations of traditional threshold-based techniques. For feature extraction, the method combines Mel-frequency cepstral coefficients and Local Binary Patterns to capture both frequency and textural characteristics and improve feature discriminability. Furthermore, Linear Discriminant Analysis optimizes dimensionality reduction, ensuring a compact yet highly informative representation of pavement conditions. The classification stage evaluates multiple approaches, including a Support Vector Machine (SVM) and Artificial Neural Network (ANN) for supervised learning, K-Means for unsupervised learning, and a Convolutional Neural Network (CNN) based on EfficientNetB7. 6464 road images were processed using the proposed methodology. The results show that SVM and ANN achieved F1-Scores above 90% owing to the quality of the extracted features. Additionally, K-Means obtained an F1-Score of 88%, outperforming EfficientNetB7, which achieved 87%, demonstrating the effectiveness of the proposed approach for segmentation and feature extraction. These findings highlight the advantages of integrating frequency-based and texture-based descriptors with advanced segmentation and classification strategies, ultimately contributing to more reliable and scalable pavement damage assessment systems.

Similar content being viewed by others

1 Introduction

Road infrastructure is a cornerstone of modern economies and societal well-being, enabling the seamless movement of people and goods, while directly influencing traffic safety, economic growth, and environmental sustainability. The continuous exposure of road surfaces to heavy traffic loads, climatic variations, and aging processes accelerates their deterioration, leading to structural failures such as cracks, potholes, and aggregate loss. Addressing this issue is crucial, as neglected road damage can escalate maintenance costs, compromise road safety, and disrupt economic activity. Consequently, the development of accurate and efficient road damage assessment methodologies is imperative to support timely and cost-effective maintenance strategies (Tamagusko et al., 2024; Tang et al., 2024; Wang et al., 2024a, 2024b, 2024c).

To address this challenge, automatic techniques for detecting and classifying pavement damage have emerged as an innovative solution. These technologies offer the capability to quickly and accurately analyze extensive road networks, overcoming the limitations of traditional methods. Through the use of advanced algorithms and image processing, these tools enable the prioritization of repairs in a more strategic and efficient manner, contributing to proactive management that maximizes the lifespan of roadways and enhances traffic safety (Liang et al., 2024; Tello-Cifuentes et al., 2024a, 2024b).

Given the complexity and variability of pavement damage, the integration of computer vision and machine learning has become a key approach for automating road condition assessments. These techniques facilitate the extraction of relevant visual features and development of predictive models capable of distinguishing between different types of deterioration. The following section provides an overview of these methodologies and highlights their roles in the automatic analysis of road damage.

2 Background

The information processing involved in the automatic analysis relies on a strategic combination of these techniques. Computer vision (CV) is used to identify and extract visual features from surfaces, such as textures and patterns associated with distinct types of damage. In turn, Machine Learning (ML) methods leverage these data to develop models that learn to recognize and classify various deteriorations with high accuracy. Figure 1 shows some of the different methods used for damage detection and classification.

The use of CV techniques has revolutionized the analysis of road surfaces (Cano-Ortiz et al., 2024). These techniques enable the processing of images captured by sensors and the identification of patterns and features that are difficult to detect using traditional methods. CV algorithms can extract relevant information such as textures, edges, and discontinuities present in the pavement, which are key indicators of damage (Zhang et al., 2024a, 2024b). The ability to analyze visual data with high precision is crucial for detecting cracks, deformations, and other structural defects, facilitating a more detailed and efficient assessment of road conditions. Among the different techniques of CV are techniques in the spatial domain such as: statistical thresholds, mathematical operators for edge detection, morphological mathematics, decomposition techniques in the frequency domain, and Fuzzy C-means:

-

Statistical thresholds are one of the conventional methods used in image processing. They consist of setting thresholds to separate regions of interest; these methods are sensitive to noise and depend on lighting conditions. (Ai et al., 2018; Akagic et al., 2018; Li et al., 2017; Wang et al., 2024a, 2024b, 2024c) used statistical thresholds for the detection of cracks in images.

-

Many of the operators for edge detection employ the calculation of the first and second derived from the gray levels of the image. These operators are sensitive to noise and can detect edges vertically and horizontally. (Aung & Kumwilaisak, 2023; Jo & Jadidi, 2020; Li et al., 2018; Nnolim, 2020; Wang et al., 2024a, 2024b, 2024c) used different operators such as: Prewitt, Canny, Roberts and Sobel for the detection of edges in images with deterioration.

-

Morphological mathematics is based on the collection and analysis of elements in the image, modifying the shape of objects through interaction with their neighboring pixels; eliminating noise and joining fragments to connect nearby objects. (Aung & Kumwilaisak, 2023; Nasrallah et al., 2024; Radopoulou & Brilakis, 2017; Wang et al., 2024a, 2024b, 2024c) used morphological mathematics as part of information processing.

-

The use of filtering techniques in the frequency domain allows the removal of variations and highlight characteristics of the information. The Wavelet Discrete Transform decomposes the image into different levels, allowing discrimination by textures of the image, which generates a better identification of the cracks (Ouma & Hahn, 2016). On the other hand (Zhou & Song, 2020) employed the Discrete Fourier Transform to eliminate noise and variations on the surface, preserving the edges of cracks.

-

Fuzzy C-Means (FCM) is a widely used segmentation algorithm in image processing, owing to its ability to handle ambiguity in region classification. Unlike traditional methods that rigidly assign each pixel to a single cluster, FCM assigns fuzzy membership to each pixel, indicating the degree to which it belongs to multiple clusters. In Bhardwaj et al., (2024), various techniques, such as Fuzzy C-Means Clustering (FCMC), Fuzzy Local Information C-Means Clustering (FLICM), and Histogram Equalization-based Fuzzy C-Means Clustering (MHFCM), were employed to effectively segment pavement damage.

ML techniques have had a crucial impact on enhancing the detection and classification of pavement damage (Sholevar et al., 2022). These techniques enable the analysis of large volumes of data and construction of models that learn to identify complex patterns associated with different types of deterioration (Milad et al., 2024). The combination of ML with image processing has facilitated a more robust and automated approach to address the problem of infrastructure preservation. Algorithms such as Artificial Neural Network (ANN), Support Vector Machine (SVM), Decision Tree (DT) and deep learning approaches have been used to improve classification accuracy, thereby optimizing decision making in road maintenance management:

-

ANN are information processing models consisting of nodes or neurons, connected by channels, that propagate the signal to the output and are optimized by the learning algorithm. This is an easy-to-use structured computational model, but its limitation is that training data may be overlearned; authors such as (Banharnsakun, 2017; Hoang & Nguyen, 2018; Tello-Cifuentes et al., 2024a, 2024b; Turkan et al., 2018; Wang et al., 2024a, 2024b, 2024c) have used neural networks for crack classification.

-

SVM are supervised learning algorithms, which rely on kernel function mapping and margin classification to generalize a training dataset decision limit (Hoang & Nguyen, 2018). Different authors have used SVM such as (Apeagyei et al., 2024; Mukti & Tahar, 2021; Pan et al., 2021; Wang et al., 2024a, 2024b, 2024c) who classified different impairments using this algorithm for multiple classes and two classes.

-

DT are algorithms of learning as a collection which through a classification tree predict the class. The models have a tree structure, where the leaves represent the class, and the branches represent the set of features that lead to that class. (Han et al., 2021; Karballaeezadeh et al., 2020; Milad et al., 2024; Radopoulou & Brilakis, 2017) have used decision trees for the classification of impairments.

-

The use of deep learning techniques such as Convolutional Neural Networks (CNN) have acquired considerable importance in recent years, given their good performance for the automatic detection of pavement failures. (Yang et al., 2020) used a convolutional network called pyramid and hierarchical boosting network (FPHBN) for pyramid-shaped crack detection. (Dai & Xia, 2023; Jiang et al., 2025; Ranjbar et al., 2021; Shakhovska et al., 2024; Zhang et al., 2024a, 2024b) employed transfer learning for pre-trained models of convolutional networks such as AlexNet, GoogleNet, SqueezNet, ResNet, DenseNet-201, YOLO and Inception-v3 used in crack detection.

2.1 Proposed methodology

Despite significant advancements in road damage detection, the existing methodologies still face critical limitations that hinder their practical application. Traditional image-processing techniques, such as threshold-based and edge-detection methods, struggle to accurately differentiate complex pavement distress patterns, resulting in high false detection rates (Zalama et al., 2014). Although machine learning and deep learning approaches have enhanced classification accuracy, their performance is often affected by variations in pavement textures, lighting conditions, and occlusions (Alnaqbi et al., 2024). Additionally, many studies rely solely on spatial domain features, overlooking frequency-based information that can improve feature discriminability (Zalama et al., 2014). To address these challenges, a new methodology is proposed for the detection and classification of pavement damage considering various available advanced pattern recognition techniques. This methodology strategically integrates vessel segmentation, fuzzy logic using Fuzzy C-Means, Local Binary Patterns (LBP) (Chen et al., 2022), Mel-frequency cepstral coefficients (MFCC) (Altayeb & Arabiat, 2024), and Linear Discriminant Analysis (LDA) (Rodriguez-Lozano et al., 2023). First, Enhanced Fuzzy C-Means (EnFCM) (Bhardwaj et al., 2024) was used to segment images into clusters, effectively handling the uncertainty and ambiguity at the boundaries of the damaged regions. Subsequently, Vessel segmentation was applied to accurately identify the affected areas. For feature extraction, MFCC is implemented to capture the relevant frequency information, whereas LBP describes the textural properties. Finally, LDA was employed to reduce the dimensionality to a two-dimensional vector, optimize class separation, and enhance classification accuracy. This combination of techniques in a hybrid approach enables a more robust and efficient analysis, effectively addressing the complexity of pavement damage and optimizing infrastructure maintenance. Additionally, classification using Artificial Neural Networks (ANN) (Wang et al., 2024a, 2024b, 2024c) and Support Vector Machines (SVM) (Yılmaz et al., 2024) demonstrated promising results, further validating the quality of the extracted features. The results showed that the quality of the extracted features enabled even unsupervised methods such as K-Means (Zhou et al., 2024) to achieve remarkable performance. This confirms the effectiveness of the proposed methodology in addressing complex pavement damage classification problems.

This methodology is based on integrating, for the first time, a systematic workflow that combines traditional and advanced techniques to tackle the pavement damage classification problem. The combined use of MFCC, LBP, and LDA for the structural representation of images, along with feature extraction and classification approaches, provides an efficient framework for optimizing the processing and analysis of complex images. Compared with approaches relying solely on deep networks, this methodology demonstrates that integrating traditional techniques can overcome the limitations of complex architectures, particularly in contexts involving heterogeneous and noisy data.

For the design and validation of the methodology, a dataset comprising real images of Colombian roads was used. These images capture the highly heterogeneous and challenging conditions of the roads, including various types of damage such as cracks, alligator cracking, areas with exposed aggregate, and severely deteriorated pavement. The critical state of many of these roads, with markedly irregular surfaces, presents significant challenges for the segmentation and classification techniques applied. This dataset stands out from commonly used ones, such as SDNET2018 (Dorafshan et al., 2018), by representing a more realistic and complex scenario aligned with the unique conditions of Colombian road infrastructure. Figure 2 shows the workflow of the proposed method.

2.2 Image acquisition

For image acquisition, data were collected in Cali, Colombia, using an Asus Pro K3500PC-L1187W laptop equipped with an Intel Core i7-11370H processor, 16 GB of RAM, 512 GB solid-state drive, and Nvidia RTX3050 graphics card. This laptop controls camera functions, including image capture and storage management. A ZED 2i stereoscopic camera was used to capture the data at different resolutions. Images were captured at 15 frames per second (FPS) while the vehicle moved at speeds between 20 and 30 km/h, minimizing motion blur while maintaining efficient coverage. The dataset consists of 1608 pairs of stereoscopic images (left and right), capturing different types of pavement damage, including cracks, alligator skin, and intact pavement, as well as a category labeled others, which include potholes, patches, repairs, and sinkholes. To ensure robustness against varying environmental conditions, images were collected at different times of the day, specifically from 11 a.m. to 1 p.m. and from 3 to 5 p.m., capturing diverse lighting conditions such as direct sunlight, partial shade, and diffused lighting (Tello-Cifuentes et al., 2024a, 2024b). Additionally, data augmentation techniques have been applied to enhance dataset diversity and improve model generalization. These included rotations in different directions, increasing the dataset to 6464 images, which were subsequently divided for model training, validation, and testing.

2.3 Damage detection

The methodology was implemented using Python programming language, following a systematic approach for detecting damage on paved surfaces through multiple phases. In the first phase, contrast enhancement techniques are used to emphasize visual features and improve image clarity. The second phase involved segmentation based on Enhanced Fuzzy C-Means, which divided the images into clusters while handling ambiguity and uncertainty at the boundaries between the damaged and undamaged regions. The third phase applied thresholding in conjunction with vessel segmentation to identify and highlight the damage edges. The fourth phase employed morphological mathematical operations to refine the segmented areas and eliminate noise. Finally, the fifth phase involves feature extraction using textural and frequency descriptors, capturing critical information from the images to support accurate class discrimination.

2.4 Image preprocessing

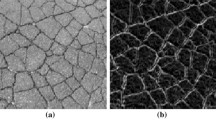

Contrast enhancement techniques have been employed to enhance the visual quality of images and to improve sharpness and contrast. This process accentuated features such as textures and edges associated with damaged regions, enabling clearer and more effective differentiation between deteriorated surfaces and intact pavements. The enhancement was implemented using Contrast-Limited Adaptive Histogram Equalization (CLAHE) to emphasize visual features and optimize image clarity. CLAHE functions by dividing the image into smaller regions or tiles and applying histogram equalization separately for each. By calculating a local histogram for every tile, CLAHE adaptively adjusts the contrast while limiting excessive enhancement by histogram clipping at a predefined threshold, thereby mitigating noise amplification and artifact creation (Pizer et al., 1990). Figure 3 shows the original image and the image with contrast enhancement.

2.5 Image processing

2.5.1 Enhanced Fuzzy C-Means

EnFCM was proposed by Szilagyi et al., (2003) initializes the cluster centers and iteratively updates them by minimizing the weighted sum of the distances between the pixels and these centers. During each iteration, membership values are recalculated based on the distances, and spatial information is integrated to ensure that the influence of neighboring pixels is considered, thereby enhancing the robustness of the segmentation against noise and outliers. The algorithm continues this process until convergence is reached, which is typically determined when changes in membership values or cluster center positions become insignificant.

where \(X=({x}_{1},{x}_{2},\dots ,{x}_{n})\) is an image with \(n\) pixels that need to be divided into \(c\) clusters, and \(\alpha \) is the parameter that controls the effect of neighboring pixels.

The objective function of the algorithm corresponding to the generated image \(\xi \) is defined as:

where \({u}_{il}\) is the membership degree of pixel \({x}_{i}\) in cluster \(l\), \({v}_{i}\) is the center of cluster \(i\),\({h}_{l}\) represents the number of pixels in the image \(\xi \) with a grayscale value equal to \(l\), and \({\xi }_{l}\) is the intensity value of the \({l}^{th}\) grayscale level. This is because the number of grayscale levels \((q)\) is generally smaller than the number of pixels \((N)\) in an image (Chen & Zwiggelaar, 2010).

2.5.2 Sato vessel segmentation

The segmentation of vessel-like structures is designed to enhance and extract tubular structures such as blood vessels, cracks, or elongated formations from images. Among the various vessel segmentation techniques, Sato vessel segmentation stands out for its robustness in detecting elongated structures. This method specifically focuses on enhancing tubular features by analyzing the curvature information of the image. The approach utilizes the Hessian matrix, which captures second-order variations in pixel intensity. The Sato method distinguishes crack structures from other shapes by computing eigenvalues of the Hessian matrix (Sato et al., 1998).

where \(x\) is a ratio that measures the "vessel-likeness" based on the eigenvalue, \({\sigma }_{x}, {\sigma }_{y}\) are parameters that control the sensitivity of the vessel measure to the eigenvalue ratios and structures, respectively. When \({\sigma }_{x}= {\sigma }_{y}\) can be regarded as an ideal line (Sato et al., 2000).

2.5.3 Mathematical morphological

Mathematical morphology provides a collection of operations based on set theory, lattice algebra, and topology. These operations are tailored to extract and modify image components such as boundaries, skeletons, and regions. Core morphological operations such as dilation, erosion, opening, and closing are pivotal for simplifying image structures while preserving essential features. This approach is effective in applications such as noise removal and shape analysis (Wang et al., 2024a, 2024b, 2024c). Figure 4 shows the process of filling empty spaces in the segmented image, which improves image continuity and eliminates gaps within the segmented regions.

2.5.4 Tensor voting

This method is based on the geometric representation of image structures using tensors, which encode local features like orientation and curvature. By propagating information through a voting process, Tensor Voting enhances the connectivity and coherence of fragmented in an image (Xing et al., 2018). At each point in the image, a local feature (such as an orientation) is represented using a second-order symmetric tensor:

where \({\lambda }_{1}\) and \({\lambda }_{2}\) are the eigenvalues, and \({e}_{1}\) and \({e}_{2}\) are the corresponding eigenvectors. The eigenvalues indicate the saliency of the structure in each direction, while the eigenvectors define the orientation.

Consider a smooth curve connecting the origin, \(O\), to a point,\(P\), with the normal vector, \(N\), at \(O\) given. The objective was to determine the most probable direction of the normal at point \(P\), as illustrated in Fig. 4. The hypothesize that the osculating circle connecting \(O\) and \(P\) provides the most likely connection because it preserves the consistent curvature along the circular arc. Thus, the most probable normal at \(P\) aligns with the normal to this circular arc, as indicated by the thick arrow in Fig. 5. This normal direction is constrained such that its inner product with \(N\) remains nonnegative. The magnitude of this normal, indicating the voting strength, diminishes with increasing arc length \(OP\), and is inversely proportional to the curvature of the circular arc (Jia & Tang, 2003; Xing et al., 2018). The voting field is defined as follows:

where \(s\) represents the arc length \(OP\), \(\kappa \) denotes the curvature, \(c\) is a constant that regulates decay in regions with high curvature, and \(\sigma \) controls the smoothness, which determines the effective neighborhood size. By evaluating all possible locations of \(P\) with nonnegative \(x\) coordinates in 2D space, the generated set of normal directions forms a 2D stick-voting field.

2.6 Feature extraction

Feature extraction is fundamental in image analysis because it captures the most relevant information for an effective and differentiated representation among various classes. There are several techniques for this purpose, including texture, shape, and frequency-based descriptors designed to identify significant patterns and structures. For instance, Local Binary Patterns describe textures by encoding the intensity relationships between neighboring pixels. Mel Frequency Cepstral Coefficients are used to represent the frequency distribution in the processed signals. To reduce the size of the feature vector without compromising its discriminative capacity, Linear Discriminant Analysis was employed, thereby optimizing the representation of data in a lower-dimensional space.

2.6.1 Local Binary Patterns

Local Binary Patterns are texture descriptors widely used in image analysis and pattern recognition. This method works by comparing each pixel in an image with its surrounding neighbors. Specifically, for each pixel, the LBP assigns a binary code based on whether the intensity of each neighboring pixel is greater or less than that of the central pixel. This binary pattern is then converted into a decimal value that characterizes the texture surrounding a pixel. By aggregating these values over an image region, LBP effectively captures textural features, making it highly suitable for tasks such as texture classification (Tajeripour et al., 2007; Zoubir et al., 2022).

where \(P\) is the total number of neighbors, \(R\) represents the circle radius, \({g}_{i}\) and \({g}_{c}\) represent the gray level of the center pixel and the neighboring pixels and \(s(x)\) is the binary value for the neighboring pixel \(x\), based on the comparison with the central pixel’s intensity.

2.6.2 Mel Frequency Cepstral Coefficients

MFCC is a known feature extraction technique primarily used in audio signal processing, especially for tasks like speech and music recognition. MFCC captures the frequency content of a signal and transforms it into a set of coefficients that compactly represent the spectral properties in a way that reflects human auditory perception. Although originally developed for audio analysis, MFCC has also been adapted for image-processing applications. By treating variations in image intensity as analogous to audio signals, MFCC can be used to analyze frequency-based patterns in the images (Liu et al., 2010).

The initial step in calculating MFCCs involves applying the Discrete Wavelet Transform (DWT) to convert the image into the frequency domain. Subsequently, to map these frequencies onto the Mel scale, the following formula is used:

where \(\xi \) is the Mel frequency corresponding to the actual frequency \(f\) in Hertz. The signal is filtered through a series of triangular filters spaced evenly on the Mel scale.

where \(f(n)\) denotes the frequency in Hertz. The logarithm is applied to each filter bank energy:

where the output energy for each filter \({F}_{P}(m)\) is computed as:

where \(P\left(n\right)\) is the magnitude. Finally, the Discrete Cosine Transform is applied to the log energies to obtain the MFCCs:

where \(m=\text{1,2},3,\dots ,M\), \(M\) es the number of Mel filters, and \({C}_{P}\left(\mathcal{k}\right)\) is the \(\mathcal{k}th\) ranges from 1 to the desired number of coefficients.

2.6.3 Linear Discriminant Analysis

Linear Discriminant Analysis is a technique used for dimensionality reduction and is particularly useful in classification problems. The central idea is to maximize the distance between different classes and minimize the dispersion within each class. To achieve this, LDA constructs a linear projection of the original data into a lower-dimensional space, in which the separation between classes is emphasized (Chen et al., 2019).

First, LDA computes intra- and inter-class scatter matrices. The intra-class scatter matrix evaluates how dispersed the data are within each class, whereas the inter-class scatter matrix measures how separated the classes are overall. LDA then seeks a linear transformation that maximizes the ratio of these two dispersions. This process ensures that the classes are projected in such a way that the variability between them is large compared with the variability within each class. By reducing the size of the feature vector, LDA not only improves computational efficiency but also simplifies the interpretation of the data (Nguyen et al., 2022; Samant & Adeli, 2000).

2.7 Damage classification

ML techniques have proven to be effective in identifying and differentiating various types of deterioration. Algorithms, such as K-Means, enable unsupervised data grouping. On the other hand, SVM are used to classify damage by establishing optimal boundaries between different classes based on features extracted from images. ANN offers more complex solutions by learning the nonlinear relationships between inputs and damage labels. With the advancement of these techniques, models such as CNN and more recent approaches such as EfficientNetB7 have increased the accuracy of damage detection. However, ML techniques remain essential in scenarios where simplicity and interpretability are crucial.

The selection of classifiers is guided by their effectiveness in addressing the challenges in pavement damage classification. K-Means was included as an unsupervised approach to explore the natural clustering of features, providing insights into the structure of the data without predefined labels. SVM was chosen for its ability to handle high-dimensional feature spaces and establish clear decision boundaries, making it particularly useful for distinguishing subtle differences between damage categories. ANN was incorporated because of its adaptability in learning complex patterns and its capability to generalize across different pavement textures. Finally, EfficientNetB7 was selected as a deep-learning-based classifier that optimally balances computational efficiency and classification performance by leveraging a compound scaling approach to enhance feature extraction. This combination allows for a comparative evaluation of traditional, shallow, and deep-learning classifiers, ensuring a robust assessment of their strengths and limitations in the context of pavement damage detection.

The dataset, comprising 6464 images, was balanced, with an equal number of images per class. It was divided into training and testing datasets. First, 70% of the images, equivalent to 4524 images, were allocated for training, whereas the remaining 30%, totaling 1940 images, were set aside for testing. From the 4524 images used for training, an additional split was made: 80% (3,619 images) were used for the actual training, and 20% (905 images) were used for validation. This division provides a structured approach to model development, ensuring sufficient data for training, validation, and testing.

To provide a clear understanding of the methodology, a summary of the parameter settings of the integrated methods is presented in Table 1. The EnFCM algorithm was configured with a fuzziness parameter of two and a maximum of 50 iterations. For vessel segmentation, a multiscale filtering approach was employed with scale parameters ranging from 1 to 25 to enhance the detection of elongated structures. In the classification stage, an ANN was implemented with one hidden layer, a learning rate of 0.0011, and an Elu activation function. In addition, the SVM was configured with a polynomial kernel of grade 2 and regularization parameter (C) of 2.66. A K-means classifier is also employed. These parameter settings were optimized through empirical evaluation and hyperparameter tuning to maximize classification performance across different pavement damage categories.

3 Result and discussion

The proposed methodology was designed to address the detection and classification of pavement damage through a systematic approach involving pre-processing, feature extraction, and classification. The dataset comprised images representing four main types of pavement conditions: Healthy Pavement, Crack, Alligator Crack, and Others. The Others class encompasses various types of damage such as potholes, patching, exposed aggregates and drains. Owing to the high variety and volume of information within this class, it was not feasible to divide it further into multiple subclasses. This approach was intended to capture and classify the diverse range of pavement damage effectively, while maintaining a balance between detail and model complexity. The dataset included both grayscale and color images to ensure a comprehensive representation of pavement conditions under different lighting scenarios to enhance the robustness and generalization of the model.

3.1 Damage detection

For damage detection, a set of image-processing techniques was employed to highlight the key features of the images, ensuring effective segmentation and characterization. Enhanced Fuzzy C-Means was used to cluster image regions based on intensity similarities, facilitating the identification of damaged areas. Additionally, the Sato Hessian-based filter was applied to enhance linear structures such as cracks and elongated features, which are critical in damage detection. The segmentation process incorporates vessel measures, effectively emphasizing tubular and crack-like patterns within the images. These techniques collectively enhance the clarity and separation of damage-related features, laying the groundwork for accurate analysis and classification. Figure 6 shows the process of Enhanced Fuzzy C-Means, thresholding using the Sato filter, and vessel segmentation.

The use of Enhanced Fuzzy C-Means is essential for clustering and aiding the differentiation of damaged regions within images. By separating clusters, fuzzy methods provide more nuanced segmentation, particularly in areas with ambiguous or overlapping features. When combined with the Sato vessel filter, fuzzy clustering enhances the definition of structures associated with damage, enabling a clearer and more precise delineation of affected areas. In addition, in regions where the Sato filter mistakenly segmented areas that were not actually damaged, fuzzy logic played a role in discriminating and excluding these false positives, further refining the segmentation process.

Mathematical morphology was applied to fill empty spaces within the segmented images, leveraging operations such as dilation and closing bridge gaps, and enhancing the continuity of damaged regions. Following this, tensor voting was utilized to address any remaining disconnections that the morphological process could not resolve, thereby providing a robust framework for connecting fragmented structures. Finally, isolated pixels, scattered noise, and circular elements were removed by eliminating irregular, nonconnected components, ensuring a cleaner and more precise representation of the detected damage. Figure 7 illustrates the process starting with the segmented image, followed by the application of dilation and tensor voting. This sequence effectively fills the gaps left by the morphological operations and eliminates unnecessary, false, or circular edges, thereby enhancing the final segmentation. Figure 8 provides an overview of the damage detection stage, showing the final segmented images for each class. This figure presents the original image, followed by the contrast enhancement, segmented image, and finally the image after the removal of the disconnected and circular components.

The implemented processing methodology enhanced the quality of the segmented images, ensuring a more accurate representation of the damage. The removal of circular elements, such as manhole covers, prevented irrelevant structures from interfering with the analysis, allowing the focus to remain on true damage areas. This improvement facilitated a clearer differentiation during the feature extraction stage, supporting a more robust characterization of the detected damage.

3.2 Feature extraction

The feature extraction phase plays a pivotal role in the proposed methodology because it enables the identification and capture of the most relevant characteristics for accurate damage classification. This stage utilized the segmented images obtained during processing, ensuring that the key damage features were effectively highlighted. The results of this phase illustrate how the extracted descriptors facilitate the differentiation of distinct patterns and structures associated with various types of damage, thereby providing a foundation for classification.

3.2.1 Local Binary Patterns

Local Binary Patterns were calculated from the thresholder images obtained using the Sato method. This process resulted in the generation of ten distinctive features per texture for each class, effectively capturing the local structural information and intensity relationships within the images. These features serve as a compact yet informative representation, enabling the differentiation of damage types by emphasizing the unique textural patterns inherent to each class.

The Mutual Information (MI) between each feature and the class labels was calculated. This measure evaluated the dependency between individual features and the target classes, providing insight into the discriminative power of each feature. The MI values obtained for the ten characteristics are shown in Fig. 9, represented as brown bars.

These results indicate that Feature 3 exhibited the highest dependency on the class labels, suggesting that it was the most informative feature for classification. Conversely, feature 2 showed the lowest MI value, indicating its limited contribution in distinguishing between classes. This analysis was essential for feature selection and dimensionality reduction as it guided the identification of the most relevant features for the subsequent processing stages.

3.2.2 Mel Frequency Cepstral Coefficients

The use MFCC, a technique commonly employed in audio signal processing, was adapted for feature extraction from images in this study. Traditionally, MFCCs have been applied to acoustic signals to represent their spectral characteristics, but in this case, their ability to capture frequency information from images was leveraged. To achieve this, DWT was first applied to the images, which allowed them to be transformed into the frequency domain and highlighted relevant features such as textures and distinctive patterns present in the processed images.

From the DWT, 13 MFCC coefficients were obtained and used as descriptors to represent each image. These coefficients represent 13 features that encapsulate the frequency variability present in the images, facilitating differentiation between the different classes of damage in the images. Although MFCCs are a standard approach in audio analysis, their application to images was successful because the wavelet transform allowed the most important features of the image to be highlighted, thereby improving the class discrimination capability during the feature extraction stage. In Fig. 9, the black bars represent the MI values calculated for the features obtained through MFCC.

The combined feature vector, consisting of 23 elements, was subjected to F-value analysis to quantitatively evaluate the relevance of each feature in distinguishing between the target classes. This statistical method calculates the ratio of variance between classes to that within classes for each feature. A higher F-value indicates a stronger ability of the feature to discriminate between classes, whereas a lower value suggests limited discriminatory power.

The results of the F-value analysis are detailed (Fig. 10). Among the features, feature 12 emerged as the most significant with an F-value of 1806.80, followed closely by Feature 13 (1804.46) and Feature 14 (1772.72). These high values suggest that the features capture the most distinctive patterns or attributes associated with the target classes. In contrast, features 2 (187.13) and 5 (343.98) demonstrated relatively lower F-values, indicating that their contribution to class differentiation was minimal. Despite their inclusion in the feature vector, these features may introduce noise or redundancy into the model.

The F-value results also highlight the contribution of the features derived from both LBP and MFCC. Notably, the top-ranked features predominantly belonged to the MFCC set, underscoring their effectiveness in capturing relevant characteristics from the transformed images. This suggests that the MFCC features, which are traditionally used in audio processing, provide valuable insights when applied to image data.

3.2.3 Linear Discriminant Analysis

The 21 most relevant features, selected based on their F-value ranking, were utilized as inputs for LDA. LDA is a supervised dimensionality reduction technique that aims to maximize the separation between different classes, while minimizing the variance within each class. By applying LDA, the original 21-dimensional feature vector is reduced to a two-dimensional vector. These two dimensions represent the most representative aspects of the data that contribute to distinguishing between the target classes. Specifically, the first feature captures the primary axis along which the classes are the most distinct, whereas the second feature further refines the separation by representing secondary discriminative features. This transformation not only simplifies the data but also allows for easier visualization of class separability, helping to better understand how effectively the extracted features differentiate between various classes.

Reducing the dimensionality to two components eliminates redundant or less informative features, preserving only those that contribute significantly to the classification. This reduction enhances the computational efficiency in the subsequent processing stages while maintaining the model’s ability to distinguish between classes, ensuring a balance between data reduction and discriminative performance.

Figure 11 (bottom-left) shows the relationships between the classes in the two-dimensional space obtained after applying LDA. This plot demonstrates that the extracted features had a good discriminative ability between classes. However, areas of overlap were identified between the Cracks, Healthy Pavement, and Others classes. In the case of the Healthy Pavement and Crack classes, the overlap was due to some Healthy Pavement images containing noise that was not completely removed during the processing stage. Additionally, certain vertical marks or linear elements, such as drain edges, cannot be fully removed, leading to features resembling those of the crack class. In the Others class, the overlap was associated with the heterogeneity of the objects and damage that made up this category, such as patches, potholes, and drains. The latter, especially in linear or fragmented forms, may have left segmented traces that could be mistaken for cracks. Furthermore, the diversity of elements within this class makes precise separation difficult, contributing to the observed overlap in the two-dimensional space.

Figure 12 illustrates the distributions of the Crack, Alligator Crack, Others, and Healthy Pavement classes. The Alligator Crack class (represented by orange squares) was distinguished by good differentiation, forming a compact group that was clearly separated from the other classes. The Healthy Pavement class (pink circles) also exhibited adequate separation, although there was a slight overlap with the others and Crack classes. The Crack class (green circles) showed some overlap with the others class (blue diamonds), which could be attributed to the shared characteristics in linear textures or residual noise in the image processing. The others class was more dispersed than the others, reflecting its heterogeneous nature, composed of a variety of objects and damages.

In the histograms, the Alligator Crack and Healthy Pavement classes had more concentrated distributions with well-defined peaks, suggesting that they were relatively homogeneous and better differentiated in these features. In contrast, the Crack and Others classes showed more dispersed distributions with tails and overlaps, indicating a higher internal variability within these classes. Overall, feature 1 provided greater discriminative power between the classes than feature 2, although neither feature alone achieved perfect separation, particularly for the Crack and Others classes.

Figure 12 presents six scatter plots illustrating the relationships between the class pairs in a two-dimensional space. The results revealed the following:

-

Crack vs. Alligator Crack: These classes exhibit clear separation. Although Crack has a broader distribution in space, it does not show significant overlap with the Alligator Crack, indicating that the features used are effective for distinguishing between them.

-

Crack vs. Others: Some degrees of overlap exist between these classes. Others showed greater dispersion, making differentiation more challenging in certain regions of space.

-

Crack vs. Healthy Pavement: A relatively clear separation is observed between these classes, although slight overlap occurs in some areas. While the Healthy Pavement forms a compact cluster on the right side, the Crack occupies a wider space.

-

Alligator Crack vs. Others: Alligator Crack displays a well-defined distribution, whereas others are more dispersed, with a minor overlap between the two classes.

-

Alligator Crack vs. Healthy Pavement: These classes are clearly separated in space, showcasing one of the most distinct differentiations among all class pairs.

-

Others vs. Healthy Pavement: Others are the most dispersed class, whereas Healthy Pavement forms a compact cluster. Despite this, a slight overlap between the two is evident.

Overall, the Alligator Crack and Healthy Pavement classes demonstrated strong differentiation in all plots, forming compact, well-defined groups. In contrast, the Crack and Others classes exhibit greater dispersion, posing additional challenges for separation owing to overlap and high internal variability, especially for the Others class.

3.3 Damage classification

Different approaches based on Machine Learning and Deep Learning techniques have been utilized for pavement damage classification. In the case of ML, methods such as K-Means, support vector machines, and artificial neural networks have been implemented. These models focus on the extraction and classification of specific features previously calculated from the processed images. On the other hand, for the Deep Learning approach, the EfficientNetB7 model was employed. This neural network, specialized in object detection and classification in images, enabled more automated and precise identification and classification of damage.

3.3.1 K-Means

The K-Means classification method was employed to group pavement images to identify patterns and organize data into different categories in an unsupervised manner. This approach relies on dividing images into clusters based on the similarity of their features, without requiring predefined labels. The algorithm assigns each image to a group whose centroid is closest and iteratively adjusts these centroids to minimize internal variation and maximize inter-cluster differences.

For pavements, the use of K-Means facilitated the identification of groups representing different types of damage or visual characteristics, thereby providing a valuable tool for data analysis and exploration. However, owing to its unsupervised nature, the performance of this method depends heavily on the quality of the extracted features and proper selection of the number of clusters. Figure 13 shows the cluster separation for each class. The results matrix (Fig. 14) indicated an overall accuracy of 88% and an average F1-Score of 88%, reflecting a good balance between precision and recall for the four image classes evaluated.

Crack class F1-Score: 82%, although it demonstrated good precision 80% its slightly lower recall 85% suggests that some elements of this class were misclassified into other categories. Alligator Crack class F1-Score: 96%; this class exhibits good performance, with high precision 100% and recall 92%, indicating very effective discrimination. Others class F1-Score: 82% similar to the Crack class; it showed moderate precision of 84% and recall of 79%, which may suggest some overlap or confusion with other classes. Healthy Pavement class F1-Score: 94%; this class stands out with high precision 91% and recall 98%, demonstrating consistent classification performance.

3.3.2 Support Vector Machine

SVM was employed for the classification of pavement damage, and the results indicated superior performance of the evaluated model on the test set, achieving an overall accuracy of 91% and an F1-Score of 91%. Table 2 presents the performance metrics of the model. The model demonstrated a robust performance, with the best results observed in the Alligator and Healthy Pavement classes. However, challenges arose in the Crack and Others classes. The Others class, which included a range of damage types and characteristics such as potholes, exposed aggregates, patching, and others, proved difficult to classify. The visual diversity within this class did not align with a uniform pattern, making it challenging for the model to accurately identify and classify it. The Crack class also posed a particular challenge: although it achieved a good precision of 80%, its recall was lower at 85%. This suggests that some elements that should have been classified as cracks were misclassified as other categories. This could be due to the similarities in features between cracks and other classes, such as edges or textures that resemble those of the Others or Healthy Pavement classes. Figure 15 shows the confusion matrix for the test data.

3.3.3 Artificial Neural Networks

ANN was implemented to classify pavement damage, leveraging its ability to learn intricate patterns from the extracted features and accurately categorize different types of damage. The training and validation accuracy curves illustrate convergence and stabilization around 90% after approximately 20 epochs. No significant overfitting was observed, as both curves remained closely aligned throughout the epochs, indicating a good generalization of the model (Fig. 16). Regarding the loss function, the training and validation losses decreased rapidly during the first ten epochs, as expected during the initial phase of training. After 20 epochs, both losses stabilized at low values (approximately 0.26). This behavior aligns with the accuracy graph and suggests that the model learns effectively, without overfitting (Fig. 17). Similarly, the Root Mean Squared Error (RMSE) for both training and validation decreased sharply within the first 10 epochs, mirroring the behavior of the loss function. After 20 epochs, it stabilized at approximately 0.17, indicating that the prediction errors were consistent and relatively low (Fig. 18).

The model achieved an F1-Score of 86% for the Crack class. A balanced combination of precision and recall was acceptable. This performance is linked to ambiguous data and overlaps during the feature-extraction stage, which hinders the differentiation of this class. In addition, certain linear elements, such as drain edges or vertical marks, were not completely removed, resulting in features that resembled cracks. For the Alligator Crack class, an F1-Score of 97% was obtained, indicating outstanding performance. The data associated with this category appeared to be clear and well represented in the training set, enabling precise and consistent classification. The Others class, with an F1-Score of 86%, reflects an acceptable performance. Overlaps in this class are attributed to the heterogeneity of its elements such as patches, potholes, and drains. In particular, drains with linear or fragmented shapes may have features that were misinterpreted as cracks. For the Healthy Pavement class, the model achieved an F1-Score of 95%, indicating that this class was well differentiated. The model effectively handled its features, suggesting that the data for this category were well-defined (Table 3).

The Alligator Crack and Healthy Pavement classes achieved metrics above 90%, representing the most distinct and well represented categories. In contrast, the Crack and Others classes exhibited slightly lower metrics, indicating some confusion with the other categories. These challenges could be attributed to issues during the feature extraction stage, such as the presence of inadequately removed elements and the intrinsic diversity of the Others class, which hinders precise separation in the feature space. These metrics were calculated using the confusion matrix shown in Fig. 19.

The results indicate that both the SVM and ANN achieved strong classification performance, with variations depending on the class. SVM obtained an overall accuracy of 91%, exceeding the Alligator Crack (F1-Score: 97%) and Healthy Pavement (F1-Score: 95%) classes, where well-defined textural patterns facilitated clear separability. However, it faced challenges in the Crack and Others classes because of feature similarities, achieving an F1-Score of 85% and 86%, respectively. ANN exhibited similar trends, with an F1-Score of 97% for Alligator Crack and 95% for Healthy Pavement, but slightly improved recall in the Crack class (87%), suggesting better handling of ambiguous cases. The Others class remains the most difficult to classify because of its heterogeneity, as it includes patches, potholes, and drains with linear elements that can resemble cracks. These results highlight that the ANN's ability to capture nonlinear relationships contributed to its better performance in ambiguous cases, while SVM excelled in structured patterns where feature separability was more distinct.

3.3.4 EfficientNetB7 CNN

The implemented convolutional neural network achieved overall performance with a loss of 0.33 and an average accuracy of 88%. However, the classification metrics revealed significant variations across the classes (Fig. 20). The Others class achieved the best results, with an F1-Score of 93%, owing to a solid combination of precision 90% and recall 96%, suggesting that the data for this class are well defined and well represented in the training set. In contrast, the Healthy Pavement class, although it reached 99% precision, exhibited a recall of 76%, indicating that many images of this class were not identified, which is reflected in an F1-Score of 86%. The Crack and Alligator Crack classes achieved F1-Scores of 82% and 89%, respectively, especially in the Crack class, which showed a low precision of 75%, likely due to overlap with other classes (see Table 4).

It is important to highlight that both the convolutional neural network and the artificial neural network were optimized using Optuna, which allowed the identification of appropriate hyperparameter configurations. Early stopping was implemented to ensure efficient training and to reduce the risk of overfitting. These optimizations contributed to stabilizing the performance of the models and maximizing their generalization capacity.

From Table 5, it can be observed that the ML techniques SVM and ANN achieved the best results, with metrics exceeding 90% in F1-Score, precision, recall, and accuracy. This performance highlights the robustness of these methods when applied to well-defined and linearly separable features such as those extracted in the preprocessing stage. The SVM classifier achieved a notable balance between precision and recall at 91%, demonstrating its ability to adapt to patterns present in images of pavement damage and healthy pavement. Similarly, the ANN maintained consistent metrics, reflecting its potential for modeling the nonlinear relationships present in the data.

In contrast, K-Means, as an unsupervised method, obtained an F1-Score of 88% and an accuracy of 88%, which is remarkable considering the absence of labels during training. These results suggest that unsupervised segmentation can be a valid tool in contexts where labeled data are limited, although its performance is slightly lower than that of the supervised methods. On the other hand, the EfficientNetB7 convolutional neural network, an advanced deep learning architecture, exhibited modest performance, with an F1-Score of 88% and an accuracy of 88%. Although these metrics are still strong, their lower performance compared to traditional methods indicates challenges in class discrimination. Possible causes of this limitation include the presence of overlaps between classes, particularly between the Crack and Others categories, and the heterogeneity of the elements within the Others class such as patches, potholes, and drainage edges.

The results demonstrate that the proposed methodology effectively enables processing and feature extraction from the images. By implementing techniques such as vessel and morphological mathematics, unwanted noise was removed, focusing on the analysis of regions of interest, such as cracks and other structural damage. Subsequently, feature extraction using methods such as LBP, LDA, and MFCC facilitated dimensionality reduction and the generation of robust descriptors, which efficiently fed classifiers. In the classification stage, the superiority of traditional methods, such as SVM and ANN, can be attributed to the quality and precision of the features extracted during the previous stage. The use of robust descriptors, such as those derived from MFCC and LBP, enables the creation of a discriminative feature space that facilitates classification. Thus, the proposed methodology not only optimized the data representation, but also mitigated the limitations associated with the intrinsic variability of the images, particularly in complex classes such as Others.

Traditional methods, such as SVM and ANN, outperform the convolutional owing to the quality and precision of the extracted features. Effective preprocessing followed by feature extraction generated a highly discriminative feature space, facilitated classification, and reduced the effect of noise in the images. In contrast, EfficientNetB7, operating on the original images, faced more complex and heterogeneous data, where unsegmented noise and class variability affected its performance.

To validate the effectiveness and generalizability of the proposed methodology, a comparative analysis was conducted using an external dataset (Özgenel, 2019). This dataset contained 1000 images of concrete with crack-type damage and 1000 images of healthy concrete, which were processed using the proposed methodology. This evaluation aimed to assess whether the methodology maintained its performance when applied to images from a different source, ensuring its robustness across diverse pavement textures and crack patterns. The results presented in Table 6 show that both the ANN and SVM classifiers achieved high values of Precision, Recall, and F1-Score when evaluated on the Özgenel (2019) dataset. Specifically, the methodology consistently achieved 100% precision in detecting cracks and healthy surfaces with only minimal variations in recall for the SVM classifier.

Although the Özgenel (2019) dataset is valuable for evaluating crack detection, it mainly focuses on a limited set of defects, specifically cracks in concrete surfaces. In contrast, the proposed methodology not only detects cracks, but also identifies other types of pavement damage, such as potholes, exposed aggregates, and repairs. This broader approach to defect classification highlights the versatility of the methodology for identifying various types of pavement deterioration. In addition, the use of advanced feature extraction techniques, such as vessel segmentation, fuzzy clustering, MFCC, and LBP, along with dimensionality reduction through LDA, provides a more detailed and accurate characterization of pavement damage, even when applied to a different dataset. In this sense, while the comparison with Özgenel (2019) is useful for evaluating the performance of the methodology, its ability to address a broader range of damage types and its enhanced defect recognition makes it a more comprehensive solution for pavement analysis in real-world environments.

4 Conclusions

The results demonstrate the effectiveness of the proposed methodology in processing and extracting relevant features, directly influencing classifier performance. SVM and ANN achieved the highest F1-Scores, 91%, underscoring the importance of well-defined and discriminative features extracted through methods such as LBP and MFCC. In contrast, EfficientNetB7 exhibited a more moderate performance, with an accuracy of 88% and an F1-Score of 87%, struggling with class overlap and the heterogeneity of the Others category. While the models with F1-Scores exceeding SVM and ANN demonstrate strong performance in terms of classification accuracy, selecting the most appropriate model for real-world applications requires a broader consideration of additional factors beyond raw performance metrics. These factors include interpretability, computational efficiency, and usability in a practical setting. Although the ANN model performs well, its complexity and resource requirements may limit its practical deployment in certain contexts. By contrast, the SVM model offers advantages in terms of ease of interpretation and faster training time, making it more suitable for environments with limited computational resources. Furthermore, models such as EfficientNetB7, although not performing as well with the current dataset, may be more beneficial in large-scale applications that require deep learning models with high capacity.

The hybrid methodology effectively integrates preprocessing, feature extraction, and classifier optimization techniques such as Optuna and EarlyStopping. This approach enhances the discriminative power of the feature space, thereby contributing to an improved classification performance. The observed classification errors were mainly attributed to the complexity of the data and variability in the damage patterns, highlighting the importance of robust preprocessing and optimization strategies. These findings suggest that the proposed methodology offers a structured framework for pavement damage classification, thereby addressing the key challenges associated with data heterogeneity.

Despite being traditionally associated with audio processing, the application of MFCC to images proved effective because of the prior use of the DWT. This adaptation was crucial because it allowed the relevant information of the image to be represented in a frequency format suitable for identifying patterns and textures that are not always visible in the spatial domain. The 13 MFCC coefficients extracted from each image were effective in representing the discriminative features of the processed images, contributing significantly to the classification of different damage classes.

The classification results revealed an overlap between the Crack and Others classes. This issue arises because of the presence of linear and fragmented elements in the Others category, such as potholes, exposed aggregates, and patching, which share visual similarities with the cracks. Although the implemented models demonstrated high overall performance, this overlap suggests the need for more refined feature extraction techniques to enhance class separability. Future work will focus on improving the feature representation by incorporating multi-scale analysis methods, such as wavelet transforms, to better capture structural differences. Additionally, integrating texture-based descriptors, such as LBP and advanced morphological operations, can enhance the distinction between these classes. Furthermore, exploring Non-Maximum Suppression (NMS) techniques can help refine the classification by reducing ambiguity in overlapping regions.

The validation of the proposed methodology using the Özgenel (2019) dataset reinforces its effectiveness and adaptability for crack classification. These results indicate that the feature extraction and classification techniques employed, including vessel segmentation, fuzzy clustering, MFCC, LBP, and LDA, are well suited for identifying pavement damage across different datasets. The strong performance of both the ANN and SVM models suggests that the methodology can be effectively applied to diverse pavement conditions.

The proposed methodology offers a structured and effective approach for detecting and classifying pavement damage and provides valuable insights into road maintenance operations. By integrating feature extraction techniques such as LBP and MFCC with optimized classification models, the methodology enhances the accuracy of damage identification, which is crucial for prioritizing repair and optimizing maintenance resources. Despite challenges, such as class overlap and data variability, the ability to distinguish between different types of damage demonstrates its applicability in real-world scenarios. Additionally, the methodology can be adapted to other infrastructure-monitoring tasks, such as bridge inspections, further expanding its impact. Future work will focus on improving the adaptability of the model to different pavement conditions and integrating it into automated inspection systems to facilitate large-scale road evaluation.

5 Competing Interests

The authors declare no competing interests.

Data availability

No datasets were generated or analysed during the current study.

Change history

24 December 2025

The original online version of this article was revised: The original version of this article unfortunately contained errors in two of the published figures. The incorrect figures were the result of an oversight during the final production process. Correction to Fig. 9 The figure originally published as Fig. 9 (Mutual information for features obtained) was not the final version intended for publication. The correct figure is provided below. Correction to Fig. 10 Similarly, Fig. 10 (F-values for Feature Selection) was inadvertently replaced with an outdated version. The correct figure appears below. These corrections do not affect the results, interpretation, or conclusions reported in the article. The authors apologize for these errors and any inconvenience caused.

29 December 2025

A Correction to this paper has been published: https://doi.org/10.1007/s10994-025-06958-z

References

Ai, D., Jiang, G., Siew Kei, L., & Li, C. (2018). Automatic pixel-level pavement crack detection using information of multi-scale neighborhoods. IEEE Access, 6, 24452–24463. https://doi.org/10.1109/ACCESS.2018.2829347

Akagic, A., Buza, E., Omanovic, S., & Karabegovic, A. (2018). Pavement crack detection using Otsu thresholding for image segmentation. In 2018 41st international convention on information and communication technology, electronics and microelectronics (MIPRO) (pp. 1092–1097). https://doi.org/10.23919/MIPRO.2018.8400199

Alnaqbi, A. J., Zeiada, W., Al-Khateeb, G., Abttan, A., & Abuzwidah, M. (2024). Predictive models for flexible pavement fatigue cracking based on machine learning. Transportation Engineering, 16, Article 100243. https://doi.org/10.1016/j.treng.2024.100243

Altayeb, M., & Arabiat, A. (2024). Crack detection based on mel-frequency cepstral coefficients features using multiple classifiers. International Journal of Electrical and Computer Engineering (IJECE). https://doi.org/10.11591/ijece.v14i3.pp3332-3341

Apeagyei, A., Ademolake, T. E., & Anochie-Boateng, J. (2024). Hybrid transfer learning and Support Vector Machine models for asphalt pavement distress classification. Transportation Research Record. https://doi.org/10.1177/03611981241239958

Aung, H. T., & Kumwilaisak, W. (2023). Unsupervised crack segmentation with candidate crack region identification and graph neural network clustering. In Proceedings of the 13th international conference on advances in information technology (pp. 1–6). https://doi.org/10.1145/3628454.3631581

Banharnsakun, A. (2017). Hybrid ABC-ANN for pavement surface distress detection and classification. International Journal of Machine Learning and Cybernetics, 8(2), 699–710. https://doi.org/10.1007/s13042-015-0471-1

Bhardwaj, M., Khan, N. U., & Baghel, V. (2024). Fuzzy C-Means clustering based selective edge enhancement scheme for improved road crack detection. Engineering Applications of Artificial Intelligence, 136, Article 108955. https://doi.org/10.1016/j.engappai.2024.108955

Cano-Ortiz, S., Lloret Iglesias, L., Ruiz, M., del Árbol, P., Lastra-González, P., & Castro-Fresno, D. (2024). An end-to-end computer vision system based on deep learning for pavement distress detection and quantification. Construction and Building Materials, 416, Article 135036. https://doi.org/10.1016/j.conbuildmat.2024.135036

Chen, Z., & Zwiggelaar, R. (2010). A modified fuzzy c-means algorithm for breast tissue density segmentation in mammograms. In Proceedings of the 10th IEEE international conference on information technology and applications in biomedicine (pp. 1–4). https://doi.org/10.1109/ITAB.2010.5687751

Chen, C., Seo, H., Jun, C. H., & Zhao, Y. (2022). Pavement crack detection and classification based on fusion feature of LBP and PCA with SVM. International Journal of Pavement Engineering, 23(9), 3274–3283. https://doi.org/10.1080/10298436.2021.1888092

Chen, D.-W., Miao, R., Yang, W.-Q., Liang, Y., Chen, H.-H., Huang, L., Deng, C.-J., & Han, N. (2019). A feature extraction method based on differential entropy and linear discriminant analysis for emotion recognition. Sensors, 19(7), 7. https://doi.org/10.3390/s19071631

Dai, D., & Xia, P. (2023). Efficient and lightweight spatial attention-based shallow CNNs with superior performance for concrete surface crack detection. Journal of Physics: Conference Series, 2637(1), Article 012020. https://doi.org/10.1088/1742-6596/2637/1/012020

Dorafshan, S., Thomas, R. J., & Maguire, M. (2018). SDNET2018: An annotated image dataset for non-contact concrete crack detection using deep convolutional neural networks. Data in Brief, 21, 1664–1668. https://doi.org/10.1016/j.dib.2018.11.015

Han, H., Deng, H., Dong, Q., Gu, X., Zhang, T., & Wang, Y. (2021). An advanced Otsu method integrated with edge detection and decision tree for crack detection in highway transportation infrastructure. Advances in Materials Science and Engineering, 2021, Article e9205509. https://doi.org/10.1155/2021/9205509

Hoang, N.-D., & Nguyen, Q.-L. (2018). A novel method for asphalt pavement crack classification based on image processing and machine learning. Engineering with Computers, 35(2), 487–498. https://doi.org/10.1007/s00366-018-0611-9

Jia, J., & Tang, C.-K. (2003). Image repairing: Robust image synthesis by adaptive ND tensor voting. In 2003 IEEE computer society conference on computer vision and pattern recognition, 2003. proceedings (vol. 1, pp. I-I). https://doi.org/10.1109/CVPR.2003.1211414

Jiang, T.-Y., Liu, Z.-Y., & Zhang, G.-Z. (2025). YOLOv5s-road: road surface defect detection under engineering environments based on CNN-transformer and adaptively spatial feature fusion. Measurement, 242, Article 115990. https://doi.org/10.1016/j.measurement.2024.115990

Jo, J., & Jadidi, Z. (2020). A high precision crack classification system using multi-layered image processing and deep belief learning. Structure and Infrastructure Engineering, 16(2), 297–305. https://doi.org/10.1080/15732479.2019.1655068

Karballaeezadeh, N., Mohammadzadeh, S. D., Moazemi, D., Band, S. S., Mosavi, A., & Reuter, U. (2020). Smart structural health monitoring of flexible pavements using machine learning methods. Coatings, 10(11), 11. https://doi.org/10.3390/coatings10111100

Li, S., Cao, Y., & Cai, H. (2017). Automatic pavement-crack detection and segmentation based on steerable matched filtering and an active contour model. Journal of Computing in Civil Engineering, 31(5), 04017045. https://doi.org/10.1061/(ASCE)CP.1943-5487.0000695

Li, W., Huyan, J., & Tighe, S. L. (2018). Pavement cracking detection based on three-dimensional data using improved active contour model. Journal of Transportation Engineering Part B: Pavements, 144(2), 04018006. https://doi.org/10.1061/JPEODX.0000028

Liang, G., Liu, W., & Li, W. (2024). Road crack detection algorithm based on improved YOLOV5 model. In 2024 IEEE 2nd international conference on sensors, electronics and computer engineering (ICSECE) (pp. 43–47). https://doi.org/10.1109/ICSECE61636.2024.10729401

Liu, D., Wang, X., Zhang, J., & Huang, X. (2010). Feature extraction using Mel frequency cepstral coefficients for hyperspectral image classification. Applied Optics, 49(14), 2670–2675. https://doi.org/10.1364/AO.49.002670

Milad, A., Ali, A. A., & Izzi Md Yusoff, N. (2024). Multi-classification machine learning algorithm for predicting pavement maintenance treatments. In 2024 IEEE international conference on automatic control and intelligent systems (I2CACIS) (pp. 30–34). https://doi.org/10.1109/I2CACIS61270.2024.10649874

Mukti, S. N. A., & Tahar, K. N. (2021). Low altitude multispectral mapping for road defect detection. Geografia-Malaysian Journal of Society and Space, 17(2), 2.

Nasrallah, A. A., Abdelfatah, M. A., Attia, M. I. E., & El-Fiky, G. S. (2024). Positioning and detection of rigid pavement cracks using GNSS data and image processing. Earth Science Informatics, 17(2), 1799–1807. https://doi.org/10.1007/s12145-024-01228-3

Nguyen, A., Long Nguyen, C., Gharehbaghi, V., Perera, R., Brown, J., Yu, Y., & Kalbkhani, H. (2022). A computationally efficient crack detection approach based on deep learning assisted by stockwell transform and linear discriminant analysis. Structures, 45, 1962–1970. https://doi.org/10.1016/j.istruc.2022.09.107

Nnolim, U. A. (2020). Automated crack segmentation via saturation channel thresholding, area classification and fusion of modified level set segmentation with Canny edge detection. Heliyon, 6(12), Article e05748. https://doi.org/10.1016/j.heliyon.2020.e05748

Ouma, Y. O., & Hahn, M. (2016). Wavelet-morphology based detection of incipient linear cracks in asphalt pavements from RGB camera imagery and classification using circular Radon transform. Advanced Engineering Informatics, 30(3), 481–499. https://doi.org/10.1016/j.aei.2016.06.003

Özgenel, Ç. F. (2019). Concrete crack images for classification. 2. https://doi.org/10.17632/5y9wdsg2zt.2

Pan, Y., Chen, X., Sun, Q., & Zhang, X. (2021). Monitoring asphalt pavement aging and damage conditions from low-altitude UAV imagery based on a CNN approach. Canadian Journal of Remote Sensing, 47(3), 432–449. https://doi.org/10.1080/07038992.2020.1870217

Pizer, S. M., Johnston, R. E., Ericksen, J. P., Yankaskas, B. C., & Muller, K. E. (1990). Contrast-limited adaptive histogram equalization: Speed and effectiveness. In [1990] Proceedings of the 1st conference on visualization in biomedical computing (pp. 337–345). https://doi.org/10.1109/VBC.1990.109340

Radopoulou, S. C., & Brilakis, I. (2017). Automated detection of multiple pavement defects. Journal of Computing in Civil Engineering, 31(2), 04016057. https://doi.org/10.1061/(ASCE)CP.1943-5487.0000623

Ranjbar, S., Nejad, F. M., & Zakeri, H. (2021). An image-based system for pavement crack evaluation using transfer learning and wavelet transform. International Journal of Pavement Research and Technology, 14(4), 437–449. https://doi.org/10.1007/s42947-020-0098-9

Rodriguez-Lozano, F. J., Gámez-Granados, J. C., Palomares, J. M., & Olivares, J. (2023). Efficient data dimensionality reduction method for improving road crack classification algorithms. Computer-Aided Civil and Infrastructure Engineering, 38(16), 2339–2354. https://doi.org/10.1111/mice.13014

Samant, A., & Adeli, H. (2000). Feature extraction for traffic incident detection using wavelet transform and linear discriminant analysis. Computer-Aided Civil and Infrastructure Engineering, 15(4), 241–250. https://doi.org/10.1111/0885-9507.00188

Sato, Y., Nakajima, S., Shiraga, N., Atsumi, H., Yoshida, S., Koller, T., Gerig, G., & Kikinis, R. (1998). Three-dimensional multi-scale line filter for segmentation and visualization of curvilinear structures in medical images. Medical Image Analysis, 2(2), 143–168. https://doi.org/10.1016/S1361-8415(98)80009-1

Sato, Y., Westin, C., Bhalerao, A., Nakajima, S., Shiraga, N., Tamura, S., & Kikinis, R. (2000). Tissue classification based on 3D local intensity structures for volume rendering. IEEE Transactions on Visualization and Computer Graphics, 6(2), 160–180. https://doi.org/10.1109/2945.856997

Shakhovska, N., Yakovyna, V., Mysak, M., Mitoulis, S.-A., Argyroudis, S., & Syerov, Y. (2024). Real-time monitoring of road networks for pavement damage detection based on preprocessing and neural networks. Big Data and Cognitive Computing, 8(10), 10. https://doi.org/10.3390/bdcc8100136

Sholevar, N., Golroo, A., & Esfahani, S. R. (2022). Machine learning techniques for pavement condition evaluation. Automation in Construction, 136, Article 104190. https://doi.org/10.1016/j.autcon.2022.104190

Szilagyi, L., Benyo, Z., Szilagyi, S. M., & Adam, H. S. (2003). MR brain image segmentation using an Enhanced Fuzzy C-Means algorithm. In Proceedings of the 25th annual international conference of the IEEE engineering in medicine and biology society (IEEE Cat. No.03CH37439) (Vol. 1, pp. 724–726). https://doi.org/10.1109/IEMBS.2003.1279866

Tajeripour, F., Kabir, E., & Sheikhi, A. (2007). Fabric defect detection using modified Local Binary Patterns. EURASIP Journal on Advances in Signal Processing, 2008(1), 1. https://doi.org/10.1155/2008/783898

Tamagusko, T., Gomes Correia, M., & Ferreira, A. (2024). Machine learning applications in road pavement management: a review. Challenges and Future Directions. Infrastructures, 9(12), 12. https://doi.org/10.3390/infrastructures9120213

Tang, H., Zhou, D., Zhai, H., & Han, Y. (2024). RPD-YOLO: a pavement defect dataset and real-time detection model. IEEE Access, 12, 159738–159747. https://doi.org/10.1109/ACCESS.2024.3488522