Parallelize R Code Using Apache Spark

R is the latest language added to Apache Spark, and the SparkR API is slightly different from PySpark. SparkR’s evolving interface to Apache Spark offers a wide range of APIs and capabilities to Data Scientists and Statisticians. With the release of Spark 2.0, and subsequent releases, the R API officially supports executing user code on distributed data. This is done primarily through a family of apply() functions. In this Data Science Central webinar, we will explore the following: ●Provide an overview of this new functionality in SparkR. ●Show how to use this API with some changes to regular code with dapply(). ●Focus on how to correctly use this API to parallelize existing R packages. ●Consider performance and examine correctness when using the apply family of functions in SparkR. Speaker: Hossein Falaki, Software Engineer -- Databricks Inc.

Recommended

More Related Content

What's hot (20)

Similar to Parallelize R Code Using Apache Spark (20)

More from Databricks (20)

Recently uploaded (20)

Parallelize R Code Using Apache Spark

- 1. Parallelize R code Using Apache Spark Hossein Falaki @mhfalaki

- 2. About me • Former Data Scientist at Apple Siri • Software Engineer at Databricks • Started using Apache Spark since version 0.6 • Developed first version of Apache Spark CSV data source • Worked on SparkR & Databricks R Notebooks • Currently focusing on R experience at Databricks

- 3. What is SparkR An R package distributed with Apache Spark: • Provides R front-endto Apache Spark • Exposes Spark DataFrames (inspired by R and Pandas) • Convenient interoperability between R and Spark DataFrames robust distributed processing, data source, off- memory data dynamic environment, interactivity, +10K packages, visualizations +

- 5. SparkR architecture (2.x) Spark Driver JVM Worker JVM Worker DataSources JVMR RBackend R R R R

- 6. IO read.df / write.df / createDataFrame / collect Caching cache / persist / unpersist / cacheTable / uncacheTable SQL sql / table / saveAsTable / registerTempTable / tables Overview of SparkR API http://spark.apache.org/docs/latest/api/R/ ML Lib glm / kmeans / Naïve Bayes Survival regression DataFrame API select / subset / groupBy / head / avg / column / dim UDF functionality (since 2.0) spark.lapply/ dapply / gapply / dapplyCollect

- 7. SparkR UDF API spark.lapply Runs a function over a list of elements spark.lapply() dapply Appliesa function to each partition of a SparkDataFrame dapply() dapplyCollect() gapply Appliesa function to each group within a SparkDataFrame gapply() gapplyCollect()

- 8. spark.lapply Simplest SparkR UDF patter For each element of a list 1. Sends the function to an R worker 2. Executesthe function 3. Returns the result of all workers as a list to R driver spark.lapply(1:100, function(x) { runBootstrap(x) }

- 9. spark.lapply control flow RWorker JVMR Driver JVM 1. serialize R closure 4. transfer over local socket 7. serialize result 2. transfer over local socket 8. transfer over local socket 10. transfer over local socket 11. de-serialize result 9. Transfer serialized closureover thenetwork 3. Transfer serialized closureover thenetwork 5. de-serialize closure 6.Execution

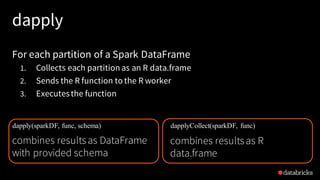

- 10. dapply For each partition of a Spark DataFrame 1. Collects each partition as an R data.frame 2. Sends the R function to the R worker 3. Executesthe function dapply(sparkDF, func, schema) combines resultsas DataFrame with provided schema dapplyCollect(sparkDF, func) combines resultsas R data.frame

- 11. dapply control & data flow RWorker JVMR Driver JVM localsocket cluster network localsocket input data ser/de transfer result data ser/de transfer

- 12. dapply control & data flow RWorker JVMR Driver JVM localsocket cluster network localsocket input data ser/de transfer result data ser/de transferresult deserialize

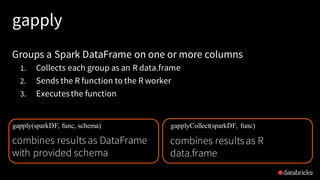

- 13. gapply Groups a Spark DataFrame on one or more columns 1. Collects each group as an R data.frame 2. Sends the R function to the R worker 3. Executesthe function gapply(sparkDF, func, schema) combines resultsas DataFrame with provided schema gapplyCollect(sparkDF, func) combines resultsas R data.frame

- 14. dapply control & data flow RWorker JVMR Driver JVM localsocket cluster network localsocket input data ser/de transfer result data ser/de transferresult deserialize data shuffle

- 15. gapply dapply signature gapply(df, cols, func, schema) gapply(gdf, func, schema) dapply(df, func, schema) user function signature function(key, data) function(data) data partition controlled by grouping not controlled

- 16. Parallelizing data • Do not use spark.lapply() to distribute large data sets • Do not pack data in the closure • Watch for skew in data – Are partitions evenlysized? • Auxiliary data – Can be joined with input DataFrame – Can be distributed to all the workers

- 17. Packages on workers • SparkR closure capture does not include packages • You need to import package son each worker inside your function • You need to install packages on workers – spark.lapply() can be used to install packages

- 18. Debugging user code 1. Verify code on the driver 2. Interactively execute code on the cluster • When R worker fails, Spark Driver throws exception with R error text 3. Inspect details of failure of failed job in Spark UI 4. Inspect stdout/stderrr of worker

- 19. Demo

- 20. Thank You